By James Eliot, Markets & Finance Editor

Last updated: April 23, 2026

Google’s Eighth Generation TPUs: The Future of AI and Cloud Computing

Google’s latest Tensor Processing Units (TPUs) are more than a technological leap; they could mark a shift in the AI landscape. With a quoted performance of 1600 exaFLOPS, these eighth-generation TPUs promise an 80% reduction in training costs, according to Google’s internal research. A drop of that size would make advanced AI capabilities more accessible and position smaller firms to compete against established giants. As the contest among the cloud giants heats up, the implications of these developments will resonate across the industry.

In a sector that Amazon Web Services (AWS) has long dominated, generating approximately $45 billion in revenue in 2020, Google’s TPUs could disrupt the status quo. Mainstream coverage may focus on performance improvements, but the bigger story is a potential democratization of AI. This article explores how Google’s innovation is reshaping cloud computing and opening opportunities for a wide range of startups.

What Are Google TPUs?

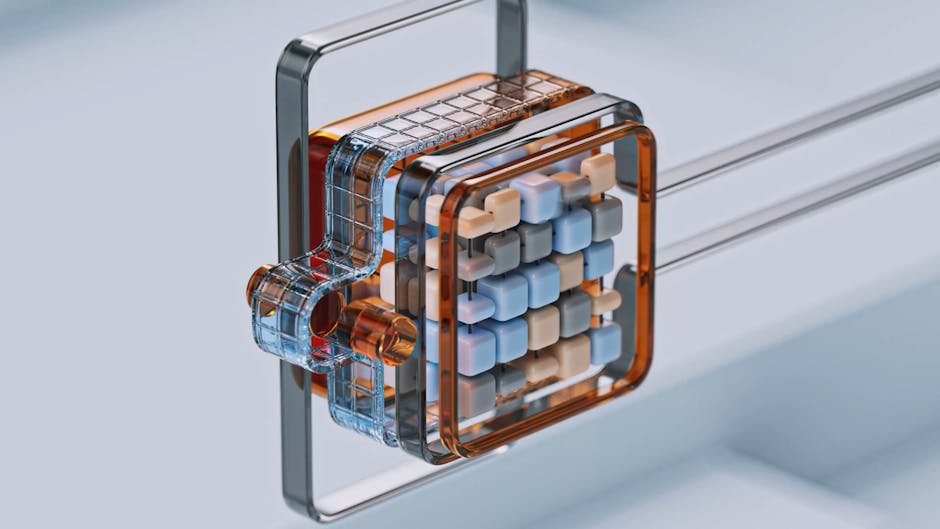

Google’s Tensor Processing Units (TPUs) are custom-designed chips built to accelerate machine learning workloads. These chips allow faster computation and lower energy consumption when running AI algorithms. That matters now because businesses are increasingly integrating AI into their strategies, making powerful computing resources critical for competitive differentiation. Think of TPUs as high-octane fuel for AI engines, enabling even the smallest companies to rev up their analytical capabilities.

How Google TPUs Work in Practice

- OpenAI’s ChatGPT: OpenAI has built ChatGPT, its AI-driven conversational agent, on platforms that benefit from TPU architecture. Cheaper compute cycles have enabled OpenAI to scale rapidly amid rising user demand, a crucial need given that the company reported exceeding 8 million users shortly after launch.

- Hugging Face: This startup has emerged as a significant player in making sophisticated machine-learning models accessible. With the advent of Google’s TPUs, Hugging Face can run its models at reduced cost, enabling it to offer more robust services to its users. The reported savings on computational tasks allow the company to reinvest in innovation, further solidifying its market position.

- Waymo’s Self-Driving Cars: Alphabet’s autonomous vehicle unit relies heavily on machine learning algorithms that can benefit from TPU efficiencies. Waymo has reduced its data processing times, which allows faster iteration on the AI models that power its self-driving technology and shows the practical application of TPUs in a sector poised for rapid growth.

- Snap Inc.: The company uses AI in diverse applications, from augmented reality to content curation. By leveraging Google’s TPUs, Snap has been able to enhance user engagement and content personalization without incurring crippling costs. The efficiency gains let Snap continue innovating while remaining financially viable.

Top Tools and Solutions

| Tool/Platform | Description | Best For | Pricing |
|---|---|---|---|
| Google Cloud TPU | Specialized hardware for AI computations. | Businesses seeking performance and cost savings. | Pay-per-use pricing. |
| AWS EC2 (P4dn) | Virtual servers with powerful GPU instances. | Enterprises with existing AWS framework. | Starts at $0.526/hour. |
| NVIDIA GPU Cloud | Offers cloud-based GPU resources for AI. | Developers and data scientists. | Variable pricing. |
| Microsoft Azure ML | Machine learning tools and environments. | Organizations diversifying into AI. | Starting from $2.25/hour. |
| Hugging Face Hub | Repository for machine learning models. | Developers looking for pre-trained models. | Free/Freemium model. |

The diversity of available platforms highlights a crucial choice for firms weighing their cloud computing options. Google’s TPUs stand out for businesses that want to lower the cost barrier to AI.
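To make the pricing comparison concrete, the following minimal Python sketch estimates the cost of a single job from an hourly rate. The two rates are the starting prices listed in the table above; the job length, the instance count, and the function names `job_cost` and `apply_reduction` are hypothetical, and the 80% figure is the reduction claimed in this article, not a measured result.

```python
# Illustrative sketch only. Rates come from the table above; the workload
# (hours and instance count) is a made-up assumption for the comparison.

def job_cost(hourly_rate: float, hours: float, instances: int = 1) -> float:
    """Total cost of a job billed per instance-hour."""
    return hourly_rate * hours * instances


def apply_reduction(cost: float, reduction: float) -> float:
    """Cost after a fractional reduction (0.80 means 80% cheaper)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return cost * (1.0 - reduction)


# Starting rates as listed in the table (USD per hour).
LISTED_RATES = {
    "AWS EC2 (listed starting rate)": 0.526,
    "Microsoft Azure ML (listed starting rate)": 2.25,
}

HYPOTHETICAL_HOURS = 500      # assumed job length
HYPOTHETICAL_INSTANCES = 4    # assumed number of instances
CLAIMED_REDUCTION = 0.80      # the article's claimed training-cost reduction

if __name__ == "__main__":
    for name, rate in LISTED_RATES.items():
        baseline = job_cost(rate, HYPOTHETICAL_HOURS, HYPOTHETICAL_INSTANCES)
        reduced = apply_reduction(baseline, CLAIMED_REDUCTION)
        print(f"{name}: baseline ${baseline:,.2f}, "
              f"with claimed reduction ${reduced:,.2f}")
```

Starting rates usually apply to the smallest instance size, so a real comparison should substitute the rate for the instance type the workload actually needs.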

Common Mistakes and What to Avoid

- Underestimating Costs: Companies like Peloton initially underestimated cloud costs while developing their machine learning capabilities, leading to budget overruns that affected profitability. Proper planning is essential to avoid such overruns.

- Failure to Scale: Startups that build model infrastructure without considering scalability, as Blue Apron initially did, often find themselves unable to accommodate growth when demand spikes. Leveraging TPUs can mitigate this risk, but only if scalability is planned from the start.

- Neglecting Training Time: Lyft faced significant delays when it attempted to deploy its machine learning models without factoring in training time. Using TPUs to shorten training can reduce these delays and help businesses remain competitive.
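The first and third mistakes both come down to arithmetic that can be done before any hardware is rented. As a rough sketch, assuming a known dataset size, epoch count, and sustained throughput (all hypothetical inputs here, as are the function names and the 25% contingency), training time and a padded budget can be estimated like this:

```python
# Planning sketch under stated assumptions: every input below is hypothetical
# and should be replaced with figures measured on the target hardware.

def training_hours(num_examples: int, epochs: int,
                   examples_per_second: float) -> float:
    """Wall-clock training time in hours at a sustained throughput."""
    if examples_per_second <= 0:
        raise ValueError("examples_per_second must be positive")
    total_seconds = num_examples * epochs / examples_per_second
    return total_seconds / 3600.0


def budget_check(hours: float, hourly_rate: float, budget: float,
                 contingency: float = 0.25) -> tuple[float, bool]:
    """Return (projected cost including contingency, fits within budget)."""
    projected = hours * hourly_rate * (1.0 + contingency)
    return projected, projected <= budget


if __name__ == "__main__":
    hours = training_hours(num_examples=36_000_000, epochs=10,
                           examples_per_second=1000.0)
    projected, fits = budget_check(hours, hourly_rate=2.25, budget=300.0)
    status = "within" if fits else "over"
    print(f"{hours:.1f} training hours, projected ${projected:,.2f} "
          f"({status} budget)")
```

The contingency term is the point of the exercise: reruns, failed jobs, and hyperparameter sweeps mean the first estimate is rarely the final bill.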

Where This Is Heading

The landscape of AI and cloud computing is at a tipping point. Analysts suggest several trends will emerge over the next year:

- Increased Market Competition: According to Goldman Sachs Research, the growing accessibility of advanced AI solutions will lead to an influx of new startups over the next 12 months, leveling the competitive arena. Expect sectors ranging from healthcare to finance to see profound transformations rooted in AI innovations.

- Shift in Cloud Dominance: AWS is facing growing pressure as competitors like Google offer increasingly competitive prices and performance with their TPUs. Research by the Federal Reserve indicates that this competition might limit AWS’s ability to raise prices, benefiting customers over the next year.

- AI Democratization: We can expect a surge in AI adoption across small to mid-sized businesses as deployment costs fall following the TPU rollout. Firms that previously could not afford to invest in AI capabilities will be able to build AI-powered products and services.

For investors, this reshaped ecosystem presents both risks and opportunities. The lower barrier to advanced AI technologies invites more players into the arena, which could revitalize entire sectors and lead to a renaissance of innovation that investors should not overlook.

Q: What are Google TPUs?

A: Google TPUs are specialized hardware designed to enhance machine learning processing. They offer significant performance improvements and cost efficiencies, allowing businesses of all sizes to incorporate sophisticated AI solutions.

Q: How much do Google TPUs reduce training costs?

A: According to Google’s internal research, the eighth-generation TPUs can reduce training costs by 80%, making advanced machine learning accessible to smaller companies that previously could not afford it.

Q: Why are TPUs significant for startups?

A: TPUs lower the barriers to entry for sophisticated AI models, enabling startups to compete effectively with larger players, which can lead to a more vibrant innovation ecosystem.

Q: How do TPUs compare to NVIDIA GPUs?

A: While NVIDIA GPUs have been a staple in the AI space, Google’s TPUs offer better cost efficiencies (30% cheaper in large-scale deployments) and higher performance, challenging NVIDIA’s stronghold.

Q: Which companies are adopting Google TPUs?

A: Companies like OpenAI, Hugging Face, Waymo, and Snap Inc. have begun utilizing Google’s TPUs to enhance their machine learning capabilities, propelling their innovations forward.

Q: What future trends may emerge from the launch of TPUs?

A: Expect a rise in market competition, disruptions to AWS’s cloud dominance, and a significant democratization of AI technologies, enabling smaller enterprises to innovate at scale.

In conclusion, Google’s eighth-generation TPUs are not just an incremental advancement; they are a strategic pivot that could redefine the competitive landscape in cloud computing and AI. As the barriers to sophisticated machine learning fall, smaller players can emerge and drive a wave of innovation worth watching. Investors and CIOs should position themselves early to seize the forthcoming opportunities.